CERN hosts some of the biggest, most complex and momentous experiments in world history. Its Large Hadron Collider (LHC), home to four major detector experiments, outputs a whopping total of 1 petabyte of data per second. Without the combined expertise of IT specialists around the world, this data would never make it into the processing pipeline. Watch an impressive animation showing how CERN's big data is collected, processed, recorded and distributed.

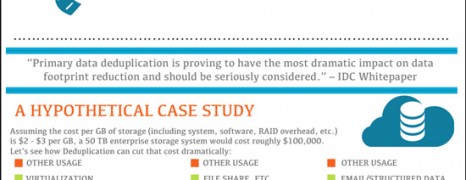

Deduplication Storage Savings

visualized by: tegile.com

An IDC whitepaper states that primary data deduplication has the most dramatic impact on data footprint reduction. See why deduplication is becoming a vital practice in today's IT infrastructure and how it can improve server efficiency while cutting costs.

The inspiration behind this exercise was the growing adoption of virtual desktop infrastructure (VDI) in enterprise business environments. Virtual server environments tend to have a very high level of redundancy, so deduplication can lead to large reductions in the amount of storage capacity required.
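The redundancy argument above can be illustrated with a minimal sketch of block-level deduplication. It assumes fixed 4 KiB blocks identified by their SHA-256 digests. The function names `dedupe`, `restore` and `dedup_ratio`, as well as the ten-desktop example, are hypothetical. This is not how Tegile's or any other vendor's arrays actually work. Real systems may use variable-size chunking, different hash functions, and inline or post-process modes.

```python
import hashlib

BLOCK_SIZE = 4096  # assumed fixed block size in bytes; real systems vary


def dedupe(images, block_size=BLOCK_SIZE):
    """Split each image into fixed-size blocks and keep one copy per unique block.

    Returns (store, recipes): store maps a SHA-256 digest to the block bytes,
    recipes maps an image name to the ordered digests needed to rebuild it.
    """
    store = {}
    recipes = {}
    for name, data in images.items():
        digests = []
        for offset in range(0, len(data), block_size):
            block = data[offset:offset + block_size]
            digest = hashlib.sha256(block).hexdigest()
            store.setdefault(digest, block)  # only the first copy is kept
            digests.append(digest)
        recipes[name] = digests
    return store, recipes


def restore(store, recipe):
    """Rebuild an image by concatenating its blocks in recipe order."""
    return b"".join(store[d] for d in recipe)


def dedup_ratio(images, store):
    """Logical bytes written divided by physical bytes actually stored."""
    logical = sum(len(data) for data in images.values())
    physical = sum(len(block) for block in store.values())
    return logical / physical


if __name__ == "__main__":
    # Hypothetical VDI setup: ten desktops cloned from one 16-block base image,
    # each with a single block of its own data appended.
    base = b"".join(bytes([i]) * BLOCK_SIZE for i in range(16))
    desktops = {f"vm{i}": base + bytes([100 + i]) * BLOCK_SIZE for i in range(10)}
    store, recipes = dedupe(desktops)
    assert restore(store, recipes["vm3"]) == desktops["vm3"]
    print(f"dedup ratio {dedup_ratio(desktops, store):.1f}:1")  # prints "dedup ratio 6.5:1"
```

In this toy case, 170 logical blocks collapse to 26 stored blocks (16 shared plus 10 unique). This shows why cloned virtual desktops deduplicate so well: the shared base image is stored only once.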

Knowledge in History

visualized by: Coveo.com

From primitive times, when humans foraged and struggled for survival, to our modern world of stellar technological advancements, humankind has developed various systems for recording, sharing and spreading knowledge. See the means that have helped people progress throughout history.

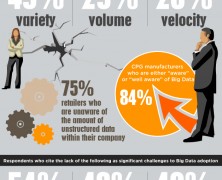

Big Data Gap

visualized by: Doctor Who?

How do manufacturers and retailers view the case for big data management? Do they realize its potential and benefits? And how many of them have taken action to tap into the insights from data mining projects?

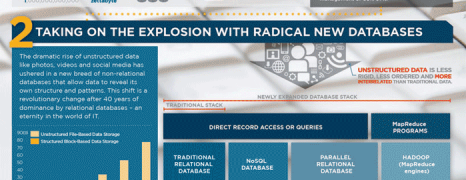

Big Data Management

visualized by: Computer Sciences Co.

Data volume is booming, and specialists predict a 4300% increase in annual data generation by 2020. These figures raise the question of how we plan to manage such volumes and, moreover, how we will make use of the intelligence they contain.
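As a quick check on the arithmetic: a percentage increase is added on top of the starting value. A 4300% rise therefore means annual data generation ends up at 44 times its baseline, not 43 times. Here $V_{\text{base}}$ is a hypothetical symbol for the baseline-year volume, which is not stated here.

$$
V_{2020} = V_{\text{base}}\left(1 + \frac{4300}{100}\right) = 44\,V_{\text{base}}
$$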